The Capybara That Changes Everything: Claude Mythos and the End of AI Complexity

A security researcher just uploaded a popular GitHub repository to Anthropic’s latest model. Within minutes, the AI found multiple zero-day vulnerabilities that thousands of human developers had missed over years of active development. The repo? Ghost, with over 50,000 stars and a massive security-conscious community. The model? Claude Mythos, internally codenamed “Capybara,” and it represents the kind of capability leap that makes your current AI workflows obsolete overnight.

This isn’t another incremental model update. Mythos is the first confirmed model trained on NVIDIA’s GB300 chips, and early reports suggest it’s about to flip the script on how we build AI systems. The twist? Everything you’ve carefully engineered to work around AI limitations now works against you. Welcome to the bitter lesson of building with LLMs: the smarter the model gets, the dumber your prompts should become.

The Bitter Lesson: Your Scaffolding Is Now Dead Weight

Here’s the uncomfortable truth about AI development. We’ve spent two years building elaborate prompt architectures, complex retrieval systems, and multi-step workflows because models needed our help. They couldn’t remember context, couldn’t reason through complex problems, and needed their hands held through every decision. So we became AI babysitters, writing 3,000-token customer support prompts that spelled out every possible scenario.

Mythos changes that equation fundamentally. The model that needed training wheels? It just graduated to a motorcycle. And all that protective scaffolding you built? It’s now dead weight.

The bitter lesson works like this: every piece of complexity you added to compensate for model limitations becomes friction when those limitations disappear. That detailed step-by-step reasoning you embedded in prompts? The model can do better reasoning on its own. Those careful retrieval constraints you programmed? The model can navigate information spaces more intelligently than your rules allow.

It’s like handing a driver a printed list of turn-by-turn directions to the grocery store and insisting they follow it, even though there’s a GPS right there on the dashboard.

Four Systems That Need Immediate Surgery

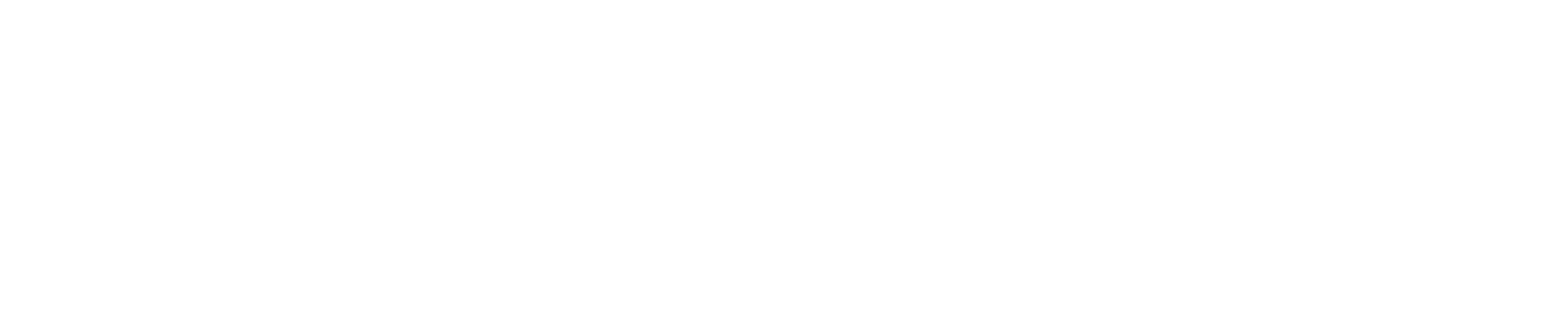

The shift to Mythos-level capability requires rethinking four core areas where we’ve over-engineered for limitations that no longer exist.

Prompt Architecture Gets the Guillotine

Start auditing every instruction with one question: “Is this here because the model needs it, or because an earlier, weaker model needed it?” Early Mythos users report cutting prompt complexity by 30-50% while seeing better results. That customer support prompt that carefully explains tone, escalation procedures, and edge cases? Try: “Resolve this customer’s issue using our knowledge base while maintaining our brand voice.” The claim is that the model infers the rest from context better than your explicit instructions ever could.
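One way to start that audit is to measure how much of a prompt is procedural instruction rather than outcome statement. The sketch below is a rough heuristic, not an official tool: the function name `audit_prompt` and the marker list are assumptions made up for illustration, and you would tune both for your own prompts.

```python
import re

# Hypothetical markers of "how to think" instructions (an assumption, not a standard list).
PROCEDURAL_MARKERS = ("step", "first", "then", "always", "never", "make sure")
MARKER_RE = re.compile(
    r"\b(" + "|".join(re.escape(m) for m in PROCEDURAL_MARKERS) + r")\b",
    re.IGNORECASE,
)

def audit_prompt(prompt: str) -> dict:
    """Split a prompt into sentences and flag the ones that look procedural."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", prompt) if s.strip()]
    flagged = [s for s in sentences if MARKER_RE.search(s)]
    return {
        "total": len(sentences),
        "flagged": flagged,
        "flagged_ratio": len(flagged) / len(sentences) if sentences else 0.0,
    }

legacy = (
    "First, greet the customer by name. "
    "Then check whether the issue is about billing. "
    "Always apologize before offering a refund. "
    "Resolve the issue using our knowledge base."
)
report = audit_prompt(legacy)
print(f"{len(report['flagged'])} of {report['total']} sentences look procedural")
```

Flagged sentences are candidates for deletion, not automatic cuts. Remove them one at a time and compare outputs before committing.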

Retrieval Systems Learn to Trust

Instead of programming exactly what the model should retrieve and when, provide well-organized, searchable repositories and let the model figure out what it needs. The large context windows combined with genuine reasoning capability mean you can load massive amounts of relevant information and trust the model to find the signal in the noise. Your job shifts from controlling retrieval to curating excellent source material.
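As a minimal sketch of what “searchable repository” could mean in practice, the example below exposes one plain search function over curated documents and leaves the decision of what to look up to the caller (in a real system, the model). The document contents, the `DOCS` store, and the `search` function are all placeholders invented for illustration.

```python
# Placeholder knowledge base; in practice this would be your curated source material.
DOCS = {
    "refund-policy": "Refunds are available within 30 days of purchase.",
    "shipping": "Standard shipping takes 5 business days.",
}

def search(query: str, docs: dict[str, str] = DOCS) -> list[str]:
    """Return document ids ranked by how many query terms appear in each document."""
    terms = set(query.lower().split())
    scored = [
        (sum(term in text.lower() for term in terms), doc_id)
        for doc_id, text in docs.items()
    ]
    # Highest score first; drop documents that match nothing.
    return [doc_id for score, doc_id in sorted(scored, reverse=True) if score > 0]

print(search("refunds within 30 days"))
```

The design choice here is that no rule decides in advance which document applies to which question. The effort goes into keeping the documents accurate and well organized.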

Domain Knowledge Becomes Contextual

Business rules, house styles, and procedural knowledge that you’ve been hard-coding into prompts? Models at Mythos level infer these patterns from examples and context more reliably than following your explicit rules. One early user reported reducing a complex 10-line research prompt to a single line with better results. The model learned their research style and quality standards from the work context itself.

Evaluation Becomes Comprehensive

Instead of multiple checkpoints and intermediate evaluations, move toward single, comprehensive assessment at the end. For software development, this means one evaluation gate that checks everything: functionality, security, performance, style. For other work, maintain the same high standards but expect near-99% accuracy on complex tasks. The model can handle the full complexity of real-world evaluation criteria.
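A single end-of-pipeline gate might look something like the sketch below. The `Check`, `evaluate`, and `gate` names are hypothetical, and the lambda checks are toy stand-ins for a real test suite, security scanner, benchmark, and linter.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Check:
    name: str
    run: Callable[[str], bool]  # takes the candidate output, returns pass or fail

def evaluate(candidate: str, checks: list[Check]) -> dict[str, bool]:
    """Run every check once, at the end, and report all results together."""
    return {check.name: check.run(candidate) for check in checks}

def gate(results: dict[str, bool]) -> bool:
    """The candidate ships only if every dimension passes."""
    return all(results.values())

# Toy placeholder checks (assumptions for illustration only).
checks = [
    Check("functionality", lambda code: "def " in code),
    Check("security", lambda code: "eval(" not in code),
    Check("performance", lambda code: "while True" not in code),
    Check("style", lambda code: all(len(line) <= 100 for line in code.splitlines())),
]

candidate = "def add(a, b):\n    return a + b\n"
results = evaluate(candidate, checks)
print(results, gate(results))
```

Because every check runs even when an earlier one fails, a rejected candidate comes back with the full list of problems rather than only the first.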

Building for the Step Change

Preparing for Mythos means redesigning systems around outcome specifications rather than process documentation. Instead of telling the AI how to think, focus on clearly communicating what you want and why it matters. Establish business constraints and guardrails that will survive model upgrades, but let the reasoning and methodology adapt.

The most successful early implementations focus on excellent tool definitions. Give the model access to the right systems and databases, define clear boundaries around what it can and cannot do, then trust it to coordinate its own workflow. Think less like a micromanaging supervisor and more like an executive setting strategic direction.
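To make “clear boundaries” concrete, here is a minimal sketch of a tool definition that carries its own permissions. It does not follow any vendor’s tool schema: `ToolDefinition`, `BoundaryError`, `invoke`, and the `orders_db` tool are hypothetical names for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ToolDefinition:
    name: str
    description: str               # what the model reads to decide when to use the tool
    allowed_actions: frozenset[str]  # the hard boundary, enforced in code

class BoundaryError(Exception):
    """Raised when a requested action falls outside a tool's permissions."""

def invoke(tool: ToolDefinition, action: str) -> str:
    if action not in tool.allowed_actions:
        raise BoundaryError(f"{tool.name} does not permit '{action}'")
    # A real system would call the backing service here.
    return f"{tool.name}:{action}"

orders_db = ToolDefinition(
    name="orders_db",
    description="Read-only access to customer order history.",
    allowed_actions=frozenset({"read"}),
)

print(invoke(orders_db, "read"))
try:
    invoke(orders_db, "delete")
except BoundaryError as err:
    print("blocked:", err)
```

The boundary lives in code rather than in the prompt, so it is the kind of guardrail that should survive a model upgrade unchanged.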

This includes preparing for multi-agent coordination where the model acts as a planner, spinning up specialized instances for different tasks while maintaining overall project coherence. The architecture shifts from human-controlled workflows to AI-coordinated systems with human oversight at key decision points.
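The planner pattern can be sketched with stub functions in place of model calls. Everything here is an assumption for illustration: `plan` and `worker` stand in for the planning model and its specialized instances, and `needs_human_approval` marks the “key decision points” with a deliberately simple rule.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

def plan(goal: str) -> list[str]:
    # Stub: a real planner would be a model call that decomposes the goal.
    return [f"{goal}: research", f"{goal}: draft", f"{goal}: review"]

def worker(subtask: str) -> str:
    # Stub: a real worker would be a specialized model instance.
    return f"done({subtask})"

def needs_human_approval(subtask: str) -> bool:
    # Hypothetical rule: only review steps are treated as key decision points.
    return subtask.endswith("review")

def run(goal: str, approve: Callable[[str], bool]) -> list[str]:
    """Plan, ask a human at the decision points, then run approved subtasks in parallel."""
    subtasks = plan(goal)
    approved = [t for t in subtasks if not needs_human_approval(t) or approve(t)]
    with ThreadPoolExecutor() as pool:
        return list(pool.map(worker, approved))  # map preserves input order

print(run("report", approve=lambda task: True))
```

Passing `approve` in as a function keeps the human checkpoint explicit and easy to swap, for example for a ticketing queue or a chat prompt.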

The Economics of Excellence

Mythos will likely cost around $200 per month initially, restricted to premium plan subscribers. Before dismissing the price, consider what you’re currently spending on Netflix, Spotify, and subscription software. If the capability claims hold, the value of access to cutting-edge AI could far exceed what those subscriptions cost.

For healthcare organizations evaluating these premium models, the implications extend beyond general productivity. Enhanced capability in medical literature analysis, clinical decision support, and regulatory compliance documentation could transform workflows that currently require extensive human review and validation. The key is identifying use cases where the step-change in capability creates proportional value.

Cybersecurity stocks reportedly dropped 5-9% when Mythos capabilities leaked, suggesting institutional recognition of the disruption potential. Companies need multi-model strategies that distinguish when to use premium versus standard models, optimizing cost while capturing capability advantages where they matter most.
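A multi-model strategy can begin as a very small routing rule. The sketch below assumes you score each task yourself; the `Task` fields, the 1-to-5 scales, the threshold, and the tier names are all illustrative assumptions rather than anything tied to actual model pricing.

```python
from dataclasses import dataclass

@dataclass
class Task:
    description: str
    complexity: int  # 1 (trivial) to 5 (hard), estimated by you
    stakes: int      # 1 (low) to 5 (high), cost of getting it wrong

def choose_tier(task: Task, threshold: int = 7) -> str:
    """Send a task to the premium model only when complexity plus stakes justify it."""
    return "premium" if task.complexity + task.stakes >= threshold else "standard"

tasks = [
    Task("summarize a support ticket", complexity=1, stakes=2),
    Task("audit authentication code", complexity=4, stakes=5),
]
for task in tasks:
    print(task.description, "->", choose_tier(task))
```

A rule this simple will misroute some tasks. Its value is that the threshold is one number you can adjust once you have real cost and quality data.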

Catching the Capability Train

The window for preparation is short. Mythos is expected within one to two months, and organizations that adapt quickly will establish competitive advantages before broader market adoption. Start auditing your current AI implementations now. Identify over-engineered prompts, complex retrieval logic, and multi-step workflows that could be simplified.

The companies that thrive in this transition will be those that learn to step back and let intelligence emerge rather than trying to control every aspect of AI reasoning. It’s the difference between conducting an orchestra and teaching someone to play every instrument yourself. Mythos gives you musicians who can read the music. Your job is composing the symphony, not explaining how to hold the bow.

The inflection point isn’t coming. It’s here. The question is whether you’ll simplify fast enough to catch it.