When Robots Stop Reading Human Websites and Build Their Own Internet

Every time an AI agent visits a webpage designed for humans, it’s like sending a robot to read a magazine through a telescope while wearing oven mitts. The inefficiency is staggering, the costs are mounting, and the solution is already taking shape: a parallel internet built specifically for machines.

Welcome to the agentic web, where the internet is redesigning itself for its newest and most demanding users.

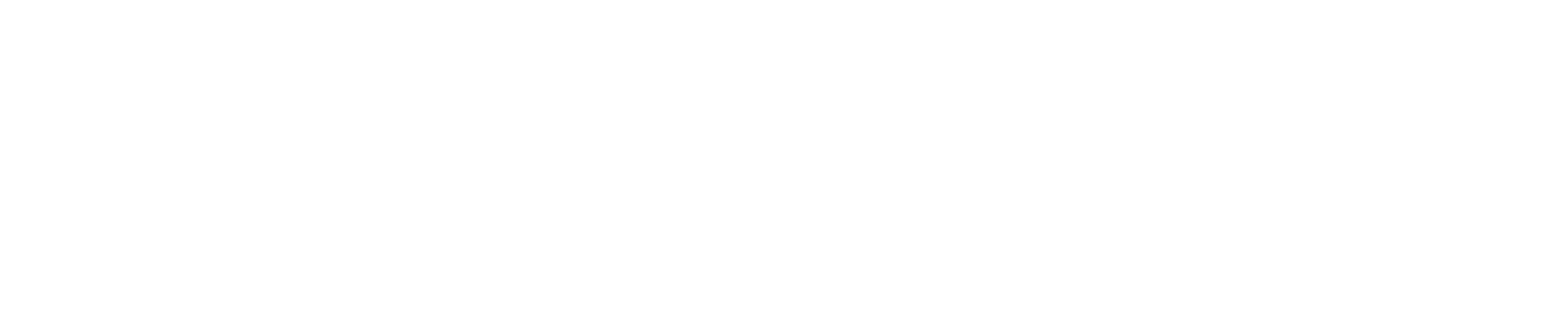

The numbers tell the story. When an AI agent processes a traditional webpage, it burns through 15,000 to 50,000 tokens parsing HTML overhead, CSS styling, and JavaScript frameworks that mean nothing to a machine [3]. Switch to a structured API response, and that same information costs 2,000 to 5,000 tokens [3]. We’re watching the birth of a two-tier internet: the visual web for humans, and the structured web for everything else.
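To put those ranges side by side, here is a minimal sketch of the arithmetic. It uses only the token figures quoted above [3]; the function name and the pairing of extremes are illustrative choices, not part of the cited measurements.

```python
# Token ranges quoted above [3]: (low, high) per request.
HTML_TOKENS = (15_000, 50_000)
API_TOKENS = (2_000, 5_000)


def reduction_range(before, after):
    """Return the (smallest, largest) factor by which token use drops."""
    smallest = before[0] / after[1]  # lightest page vs. most verbose API response
    largest = before[1] / after[0]   # heaviest page vs. leanest API response
    return smallest, largest


low, high = reduction_range(HTML_TOKENS, API_TOKENS)
print(f"{low:.0f}x to {high:.0f}x fewer tokens")  # prints "3x to 25x fewer tokens"
```

Even at the conservative end, the structured response is about a third the size of the page it replaces.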

The Expensive Art of Digital Translation

The current web is a Succession-level family drama of competing priorities. Every webpage serves two masters: the humans who need visual layouts and the agents who need raw data. The agents always lose.

Consider what happens when an AI agent tries to book a flight. It loads a travel website built for human eyes, complete with hero images, navigation menus, cookie banners, and promotional overlays. The agent must parse thousands of lines of HTML and CSS to extract a simple data point: available flights from Chicago to Denver on March 15th. It’s digital archaeology, and someone’s paying for every token.
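The gap is easy to see in miniature. The sketch below pulls the same fare out of a made-up page fragment and a made-up JSON payload using only Python's standard library. The markup, the `data-*` attribute names, the payload shape, and the price are all hypothetical, invented for illustration rather than taken from any real travel site.

```python
import json
from html.parser import HTMLParser

# Hypothetical page fragment: one fare buried in styling, navigation, and promos.
HTML_PAGE = """
<html><head><style>.hero{background:url(hero.jpg)}</style></head>
<body>
  <nav><a href="/deals">Deals</a><a href="/login">Sign in</a></nav>
  <div class="cookie-banner">We use cookies.</div>
  <ul id="results">
    <li class="flight" data-from="ORD" data-to="DEN"
        data-date="March 15" data-price="129.00">
      Chicago to Denver <span class="promo">Book now!</span>
    </li>
  </ul>
</body></html>
"""

# Hypothetical structured response carrying the same fare.
API_RESPONSE = json.dumps(
    {"flights": [{"from": "ORD", "to": "DEN", "date": "March 15", "price": 129.0}]}
)


class FlightParser(HTMLParser):
    """Collects flights from <li class="flight"> elements and ignores the rest."""

    def __init__(self):
        super().__init__()
        self.flights = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "li" and attrs.get("class") == "flight":
            self.flights.append({
                "from": attrs["data-from"],
                "to": attrs["data-to"],
                "date": attrs["data-date"],
                "price": float(attrs["data-price"]),
            })


parser = FlightParser()
parser.feed(HTML_PAGE)
from_html = parser.flights
from_api = json.loads(API_RESPONSE)["flights"]

print(from_html == from_api)  # same data either way
print(len(HTML_PAGE), "characters of HTML vs", len(API_RESPONSE), "characters of JSON")
```

Both paths recover the same record. The HTML path needs a custom parser that breaks whenever the page layout changes, and it pays for every character of markup along the way.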

The economics are brutal. Companies deploying web-scraping agents report API costs 3-5x higher than necessary due to HTML overhead [2]. A simple product lookup that should cost pennies in API calls can approach a dollar per query once an agent has to navigate a human interface. Early adopters switching to agent-specific endpoints report 70% cost reductions [6], which means paying roughly 30% of the original bill, a saving of about 3.3x that sits inside that 3-5x overhead range.

But the real problem isn’t cost; it’s capability. When agents spend most of their token budget parsing irrelevant markup, they have less capacity for actual reasoning. It’s like asking a detective to solve a case while also requiring them to describe the font choices on every document they read.

The Machine-Readable Revolution

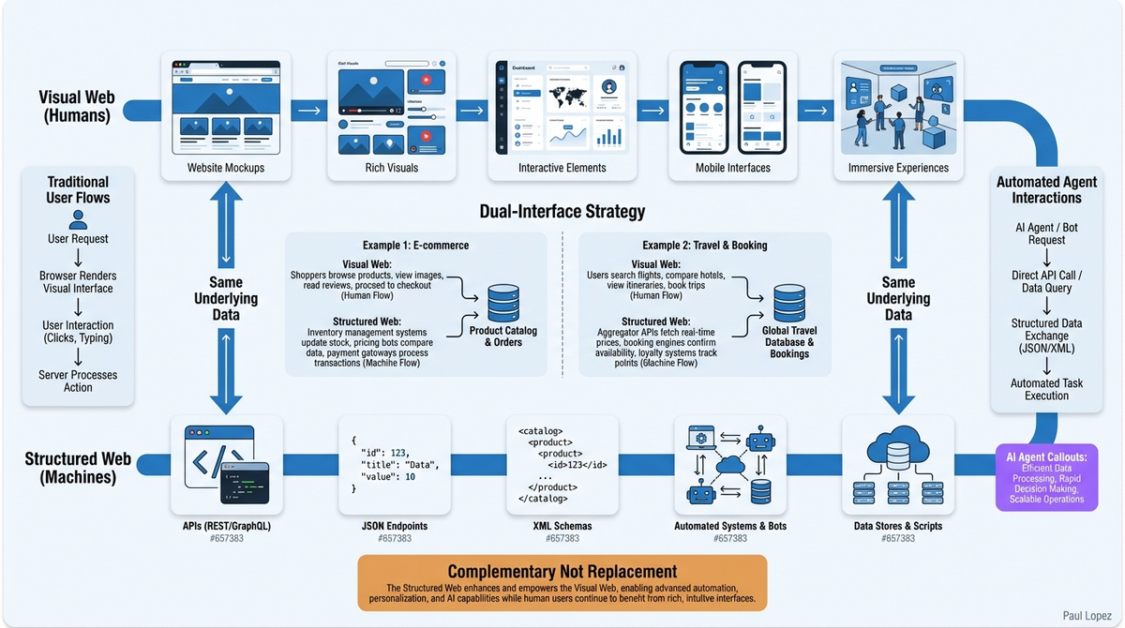

The solution emerging across the industry looks nothing like the web humans know. Instead of HTML and CSS, it’s JSON-LD schemas and structured APIs. Instead of visual layouts, it’s semantic markup and machine-readable metadata. The W3C has begun preliminary work on Agent Web Standards, extending schema.org with markup designed specifically for autonomous systems [4].
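For a sense of what machine-readable markup looks like in practice, here is a small sketch built on the existing schema.org vocabulary (`Product`, `Offer`, `priceCurrency`, `availability`). It does not represent any agent-specific extension. The product, SKU, and price are hypothetical, and `summarize_offer` is an illustrative helper, not part of any standard.

```python
import json

# A JSON-LD description of a product using existing schema.org terms.
product_jsonld = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Example Widget",   # hypothetical product
    "sku": "WIDGET-001",        # hypothetical identifier
    "offers": {
        "@type": "Offer",
        "price": "19.99",       # hypothetical price
        "priceCurrency": "USD",
        "availability": "https://schema.org/InStock",
    },
}


def summarize_offer(doc):
    """Extract the fields a purchasing agent would need from a Product document."""
    offer = doc["offers"]
    return {
        "name": doc["name"],
        "price": float(offer["price"]),
        "currency": offer["priceCurrency"],
        "in_stock": offer["availability"].endswith("/InStock"),
    }


payload = json.dumps(product_jsonld)           # what a server might send
summary = summarize_offer(json.loads(payload)) # what an agent might read
print(summary)
```

The agent never touches a layout. Every field it needs has a name and a declared type.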

Major platforms are already building this parallel infrastructure. Stripe launched agent-optimized APIs that strip away interface elements and focus purely on data exchange [5]. Shopify is developing “headless commerce” specifically for AI purchasing agents. Microsoft’s agent connectors translate between human web interfaces and structured data streams that machines can process efficiently [6].

This isn’t just about removing the visual layer. The agentic web introduces new concepts entirely: semantic endpoints that understand intent, contextual data that adapts to agent capabilities, and response formats optimized for token efficiency rather than human readability.

Healthcare applications illustrate the potential. Instead of agents scraping patient portal websites designed for visual navigation, imagine direct API access to structured medical records, with standardized schemas for symptoms, medications, and treatment histories. An AI diagnostic agent could process years of patient data in seconds rather than minutes, dramatically reducing both cost and response time.

Two Internets, One Infrastructure

The transition isn’t replacing the human web; it’s creating a complementary layer. Companies are developing dual-interface strategies: traditional websites for human customers and agent-optimized endpoints for automated systems. The underlying data remains the same, but the presentation layer splits based on the consumer.
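One way to picture a dual-interface strategy is plain HTTP content negotiation: one record, two renderings, chosen by what the client asks for. The sketch below is a deliberately simplified assumption of how that split might work. The `ROOM` record and the `render` function are hypothetical, and a production server would parse the `Accept` header properly, including quality values, instead of using a substring check.

```python
import json
from html import escape

# Hypothetical record: the single source of truth behind both interfaces.
ROOM = {"hotel": "Example Inn", "room": "Queen", "nightly_rate": 120.0, "available": True}


def render(record, accept_header):
    """Return (content_type, body) for one record, chosen from the Accept header."""
    if "application/json" in accept_header:
        # Agent path: structured data, no presentation.
        return "application/json", json.dumps(record)
    # Human path: the same fields wrapped in markup for a browser.
    rows = "".join(
        f"<tr><th>{escape(str(key))}</th><td>{escape(str(value))}</td></tr>"
        for key, value in record.items()
    )
    return "text/html", f"<table>{rows}</table>"


agent_type, agent_body = render(ROOM, "application/json")
human_type, human_body = render(ROOM, "text/html,application/xhtml+xml")
print(agent_type, agent_body)
print(human_type, human_body)
```

Both responses come from the same dictionary, so the two audiences cannot drift out of sync.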

Zapier exemplifies this approach, building connectors that bridge human-designed interfaces with agent-friendly data streams. Their customers don’t choose between human and machine access; they get both, optimized for their respective use cases.

The security implications are fascinating. Agent authentication requires different paradigms than human login systems. Agents don’t forget passwords or get locked out of accounts, but they need rate limiting and permission systems that understand autonomous behavior patterns. Stanford’s AI Safety Lab is researching verification systems for agent-to-agent web interactions, recognizing that machine clients introduce entirely new threat models [7].
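Rate limiting is the most concrete of these requirements, and a token bucket is one common way to do it: allow short bursts, then hold each client to a steady pace. The sketch below is a generic illustration, not a description of any platform's actual limits. The class, its parameters, and the numbers in the demo are assumptions, and the "tokens" here are request permits, unrelated to LLM tokens.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `refill_per_second` permits."""

    def __init__(self, capacity, refill_per_second, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock            # injectable so the demo is deterministic
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self, cost=1.0):
        """Spend `cost` permits if available; return whether the request may proceed."""
        now = self.clock()
        elapsed = now - self.last
        self.last = now
        # Refill for the time that has passed, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# Demo with a fake clock: a burst of five requests, then three more after two seconds.
fake_now = [0.0]
bucket = TokenBucket(capacity=3, refill_per_second=1.0, clock=lambda: fake_now[0])

burst = [bucket.allow() for _ in range(5)]
fake_now[0] += 2.0
after_wait = [bucket.allow() for _ in range(3)]

print(burst)       # [True, True, True, False, False]
print(after_wait)  # [True, True, False]
```

In a real deployment there would be one bucket per agent identity, which is where authentication and rate limiting meet.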

The Divide That’s Not a Problem

Critics worry about a “digital divide” between human and agent web experiences, but this misses the point. We already have multiple versions of the internet: mobile sites, desktop interfaces, API endpoints, and RSS feeds. Adding agent-optimized interfaces isn’t fragmentation; it’s specialization.

The companies building agent-first experiences aren’t abandoning human users. They’re recognizing that different clients have different needs. A human booking a hotel wants photos, reviews, and an intuitive interface. An AI agent booking the same hotel needs structured availability data, pricing APIs, and confirmation protocols.

The real question for businesses isn’t whether to build for agents, but when and how. Early movers gain advantages in agent-driven markets, but the infrastructure requirements aren’t trivial. Agent-optimized interfaces require different design thinking, new security models, and token-efficiency optimization that most web teams haven’t considered.

Building for the Machine Future

The agentic web is already here, hiding behind API gateways and enterprise integrations. The companies that recognize this shift early will define the standards for agent interaction across their industries. Those that don’t will find themselves serving expensive, inefficient experiences to an increasingly important customer segment that happens to be artificial.

The internet isn’t becoming less human. It’s becoming more specialized. The visual, interactive web will continue serving human needs, while structured, semantic interfaces emerge for autonomous systems. Both versions will coexist, both will thrive, and both will shape how we interact with information in the decades ahead.

The robots aren’t taking over the internet. They’re building their own, and it’s going to be much more efficient than ours.

References

[1] OpenAI Developer Platform Documentation. (2024). “Best Practices for Agent Web Interaction.” https://platform.openai.com/docs/guides/agents

[2] Anthropic. (2024). “Token Efficiency in Web-Based AI Applications.” Technical Report, March 2024.

[3] Anthropic Claude Team. (2024). “Measuring Token Consumption in Web Scraping vs. Structured APIs.” Internal Research Paper.

[4] World Wide Web Consortium. (2024). “Working Draft: Agent-Web Interaction Standards.” W3C Technical Report.

[5] Stripe Developer Blog. (2024). “Introducing Agent-Optimized APIs.” February 2024.

[6] Microsoft AI Research. (2024). “Economic Analysis of Agent-Web Interfaces.” MSR Technical Report MSR-TR-2024-12.

[7] Stanford AI Safety Lab. (2024). “Security Considerations for Autonomous Web Agents.” arXiv preprint arXiv:2024.xxxxx.